This article was published as a chapter of the ThingsCon Report ‘The State of Responsible IoT; Small Escapes from Surveillance Capitalism’, December 2019.

Things are becoming networked, autonomous things with their own agency as a result of developments in artificial intelligence. The character of things is changing: they become things that predict, and that have more knowledge than the human they interact with. Things are building a new kind of relation with humans: predictive relations. What are the consequences of these predictive relations for the interaction with humans? Will things that know more than we humans do help us understand the complex world, or will they start to prescribe behavior to us without us even knowing? What is the role of predictive relations in the design practice of the future designer?

This notion of predictive relations is linked to earlier research in the research program PACT (Partnerships in Cities of Things) and the work in the Connected Everyday Lab by Elisa Giaccardi and others. The notion that we will have affective things that draw conclusions from their interactions with humans, and combine these with built-up knowledge from the network, is illustrated in the provocation by Iohanna Nicenboim and Elisa Giaccardi called Affective Things.[1]

In a paper (M. L. Lupetti, Smit, & Cila, 2018) we described some near-future scenarios in which things connect to existing data and cloud services in the smart city and act in concert with people. In a few specific scenarios we sketched how these relations may play out. One is a pizza delivery pod that knows so much background information, in combination with historical data on orders, that it can become an affective thing and start a dialogue about the situation of the person ordering the pizza. She always used to order two pizzas, but lately the orders have dropped to one pizza, and in combination with other behavior the conclusion is drawn that her relationship with her boyfriend has ended. The delivery pod here takes on a new role as a good friend, a shoulder to cry on. That role can do no harm if it stays within the domain of that one interaction. The links to behavior in other situations indicate, though, that it does not stay within that domain.

Another example describes a future public transport situation, based on a system of smaller transport pods with flexible route planning for going from A to B. This means that the pods don’t follow fixed routes and travel time is greatly reduced. But there is a catch. The system is not only flexible in its journey mapping; the planning also takes into account who is travelling, including the social status of the traveler. The service therefore plans its routes based on a combination of actual route efficiency and these priorities. The consequence is that the journey time is hard to predict for the individual traveler. Creating more transparency in the decision making is key to building citizen robotic systems that are trusted by human citizens (M. Lupetti, Bendor, & Giaccardi, 2019).

The foundation of this future society

What drives the emergence of these systems? The first driver is the digitization of our world in all its aspects. We have deconstructed our cities, built up from increments of buildings and structures, into a layered model in which the base layer is the physical layer. On top of that we have a digital layer that is connected to databases and computing capabilities. Entities can be physical or digital, and use the digital layer to be assembled into a state within a service. This is the fluid assemblage (Redström & Wiltse, 2018). Not only can these assemblages be defined at the moment of use or interaction; the physical layer also functions differently. Instead of setting the stage, it is a blank sheet with the right components. Kitchin & Dodge described this situation as a Code/space (Kitchin & Dodge, 2011), a space where the digital computing layer has become crucial in defining the functionalities. No computing layer means no functionality. This can already be seen in its most extreme form at an airport. In the deconstructed city the services offered are totally open to interpretation, but at the same time the control of that layer is increasingly limited to a select number of players.

The thing itself is changing too, into an intelligent artefact. It connects with an existing network, collects real-time data and acts proactively. Most interestingly, it exhibits social behavior. These things take their own role in our society; things are citizens.

Predicting and prescripting

That things are becoming networked objects behaving as fluid assemblages is the start. These things can adapt to the data in the network and to the interaction with other things and humans. This creates a situation in which the thing has more knowledge of possible future developments than the human can have based on a combination of observation and anticipation. Anticipation is here based on knowledge from experience or learned interpretations. If we let go of a ball, we understand it will fall to the ground. When that same ball is an autonomously operating ball[2] that can connect to the network, and things start to predict outcomes, it will feed forward on situations we did not anticipate.

The more complex the behavior of the thing, the more the interaction is steered by anticipation of expected results. The more complex the thing, the more dependent we become on the predictions it makes.

In the future we will shift continuously between the simulated future and the now. Think of simple examples such as the weather app whose rain prediction, based on radar data and sensors, rules our expectation of getting wet when going outside more than our own judgement of the actual rain does. A more specific example is a Tesla that predicts an accident and takes the initiative to brake before the accident ahead has actually happened[3].

Here we enter an interesting domain of tension: what rules, a predictive system that helps us to understand the complex world, or a system that prescribes our behavior?

If things form a framework for our decisions, will we transfer agency to the system of things? And if we do so, will that limit our own agency? This is hardly a question; we already put more and more trust in systems to hold knowledge, and remove that knowledge from our own memories. Google is the ultimate assistant, and an example of what dependency entails. The filter bubble has become a recognized concept. What we think is true depends on what tools like Google present to us.

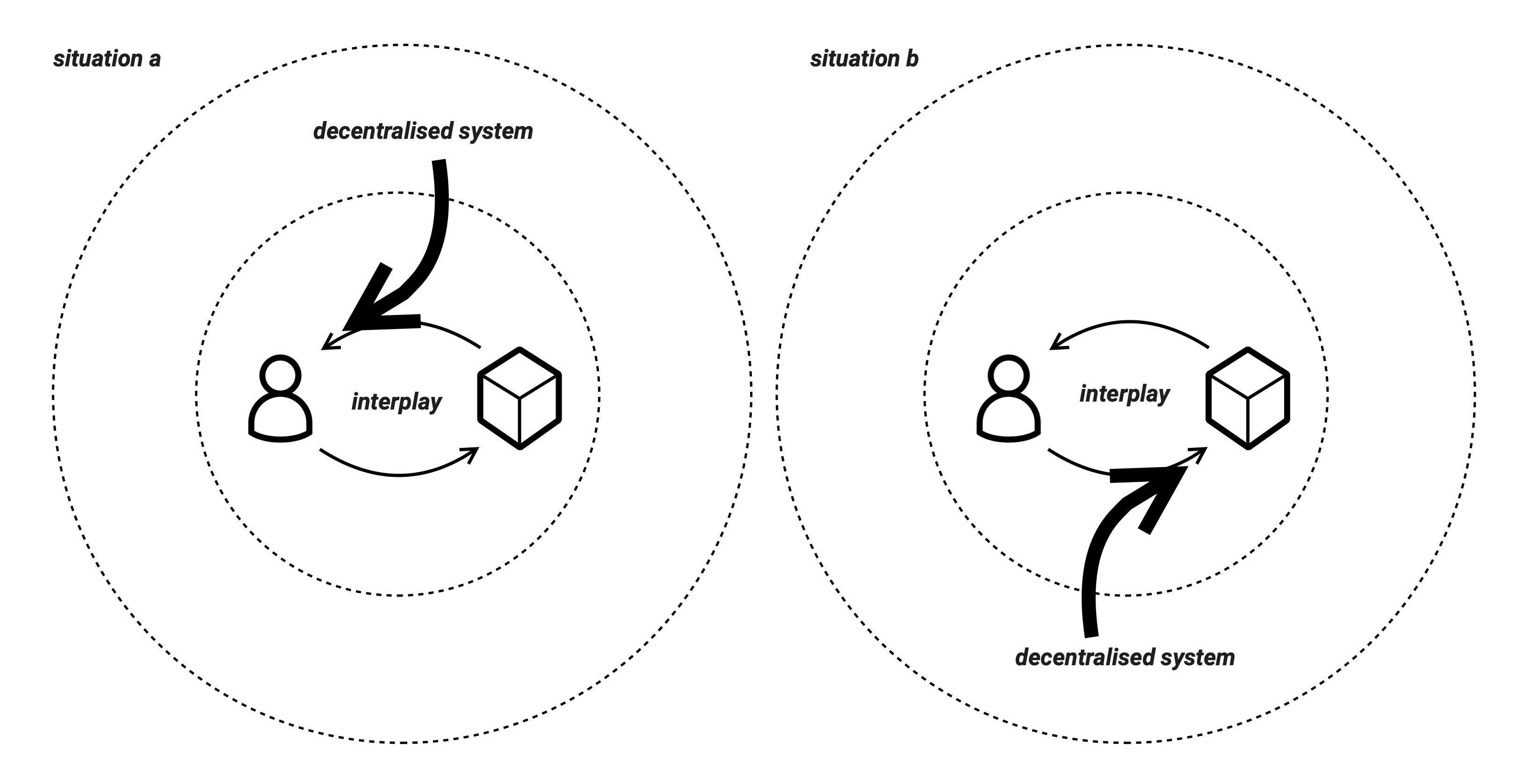

As soon as we start to experience this disconnect between the real world and (pre)scripted life, alienation is a possible outcome. We feel disconnected from our devices because their workings are defined more by the decentralised system than by their direct operation. This can even cause physical unease (Bean, 2019).

A new design space

The interplay of predictions and actions creates a complex, interrelated design space. Predictive behavior shapes our mental model of how the thing acts. At the same time, our actions shape the digital model of the thing. In a first model of predictive relations, the interplay of the human and the autonomously operating thing is deconstructed into a combination of pattern recognition, interactions with a digital representation of the thing, and knowledge of probable futures generated by similar instances in the network.

For designers of physical things, the span of control is already extending from the physical instance to the digital service that is incorporated in or unlocked via the physical artefact. With the notion of predictive relations comes a need for designing contextual rule-based behavior. The choices made in the design define the distribution of agency between system, thing and human. Systems of things form an entity of their own, and the design influences the system and the things as much as it influences the interplay of the thing and the human. To deal with this complexity, the default response might be to automate the system behavior with machine learning and AI. But what does that mean for our position in that system? Can we keep a set of responsible rules? We like to work with known knowns and known unknowns[4]. But what is the consequence for the way we design if we need to do this for unknown knowns?

Notes

[1] Read more on the project at https://iohanna.com/Affective-Things-More-than-Human-Design, last accessed 6 November 2019

[2] Art project, https://dispotheque.org/en/le-23eme-joueur, last accessed 6 November 2019

[3] More on the specific event at https://www.engadget.com/2016/12/28/tesla-autopilot-predicts-crash, last accessed 30 July 2019

[4] Referring here to an infamous statement by Donald Rumsfeld, US Secretary of Defense, in a 2002 news briefing, see https://en.wikipedia.org/wiki/There_are_known_knowns, last accessed 6 November 2019

References

Bean, J. (2019). Nest Rage. Interactions, 26(May-June 2019), 1.

Kitchin, R., & Dodge, M. (2011). Code/Space: Software and Everyday Life. Cambridge, MA: The MIT Press.

Lupetti, M., Bendor, R., & Giaccardi, E. (2019). Robot Citizenship: A Design Perspective.

Lupetti, M. L., Smit, I., & Cila, N. (2018). Near future cities of things: addressing dilemmas through design fiction. Paper presented at NordiCHI, Oslo.

Redström, J., & Wiltse, H. (2018). Changing Things: The Future of Objects in a Digital World. London: Bloomsbury Visual Arts.
